We tackle the problem of estimating the 3D pose of an individual’s

upper limbs (arms+hands) from a chest mounted

depth-camera. Importantly, we consider pose estimation

during everyday interactions with objects. Past work shows

that strong pose+viewpoint priors and depth-based features

are crucial for robust performance. In egocentric views,

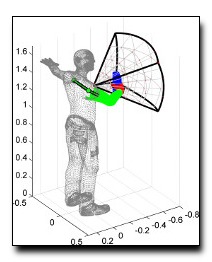

hands and arms are observable within a well defined volume

in front of the camera. We call this volume an egocentric

workspace. A notable property is that hand appearance

correlates with workspace location. To exploit this correlation,

we classify arm+hand configurations in a global egocentric

coordinate frame, rather than a local scanning window.
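The workspace idea can be illustrated with a minimal NumPy sketch. It assumes a pinhole depth camera and a hand-picked, axis-aligned box in front of it. The intrinsics `FX, FY, CX, CY`, the box bounds `WS_MIN`/`WS_MAX`, the grid size and the helper names are all hypothetical. The plain metric voxel grid also stands in for the paper's perspective-aware features and does not reproduce them. The point it shows is that every depth frame maps to one fixed-length vector whose entries index absolute locations in the workspace, so no scanning window is needed.

```python
import numpy as np

# Hypothetical pinhole intrinsics (pixels); real values would come from
# calibrating the chest-mounted depth camera.
FX, FY, CX, CY = 285.0, 285.0, 160.0, 120.0

# Hypothetical workspace box in the camera frame (metres): x right, y down,
# z forward. Arms and hands are assumed to stay inside this volume.
WS_MIN = np.array([-0.6, -0.6, 0.1])
WS_MAX = np.array([0.6, 0.6, 0.9])
GRID = (12, 12, 8)  # number of voxels along x, y, z


def backproject(depth):
    """Convert an HxW depth map (metres, 0 = missing) to Nx3 camera-frame points."""
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]  # row (v) and column (u) index of every pixel
    valid = depth > 0
    z = depth[valid]
    x = (u[valid] - CX) * z / FX
    y = (v[valid] - CY) * z / FY
    return np.stack([x, y, z], axis=1)


def workspace_occupancy(depth):
    """Binary occupancy of the whole workspace volume, flattened to a fixed-length vector."""
    pts = backproject(depth)
    # Discard points that fall outside the workspace box.
    inside = np.all((pts >= WS_MIN) & (pts < WS_MAX), axis=1)
    pts = pts[inside]
    # Map each remaining point to integer voxel indices in the fixed grid.
    cell = (WS_MAX - WS_MIN) / np.array(GRID)
    idx = np.floor((pts - WS_MIN) / cell).astype(int)
    idx = np.minimum(idx, np.array(GRID) - 1)  # guard against float round-off
    occ = np.zeros(GRID, dtype=np.uint8)
    occ[idx[:, 0], idx[:, 1], idx[:, 2]] = 1
    return occ.ravel()
```

Because each entry always refers to the same region of the workspace, a classifier trained on these vectors can learn location-specific hand appearance, which is the correlation described above.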

Classifying in a global egocentric frame greatly simplifies the architecture and improves performance. We propose an efficient pipeline that 1) generates synthetic workspace exemplars for training using a virtual chest-mounted camera whose intrinsic parameters match our physical camera, 2) computes perspective-aware depth features on this entire volume, and 3) recognizes discrete arm+hand pose classes through a sparse multi-class SVM. We achieve state-of-the-art hand pose recognition performance from egocentric RGB-D images in real time.
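Steps 1 and 3 of the pipeline can be sketched in NumPy as below. This is a minimal illustration under stated assumptions, not the authors' implementation. The intrinsics and image size are hypothetical placeholders. Synthetic exemplars are rendered as z-buffered point clouds rather than full meshes. The sparse multi-class SVM is approximated by one-vs-rest linear SVMs with an L1 penalty, trained by proximal subgradient descent; that solver is our choice, and `render_depth`, `train_sparse_ovr_svm` and `predict` are hypothetical names.

```python
import numpy as np

# Hypothetical intrinsics and resolution; in the described pipeline the virtual
# camera would reuse the calibrated values of the physical camera.
FX, FY, CX, CY = 285.0, 285.0, 160.0, 120.0
H, W = 240, 320


def render_depth(points):
    """Project Nx3 camera-frame points (metres) into an HxW depth map with a z-buffer."""
    depth = np.full((H, W), np.inf)
    pts = points[points[:, 2] > 0]  # keep points in front of the camera
    u = np.round(FX * pts[:, 0] / pts[:, 2] + CX).astype(int)
    v = np.round(FY * pts[:, 1] / pts[:, 2] + CY).astype(int)
    ok = (u >= 0) & (u < W) & (v >= 0) & (v < H)
    # np.minimum.at keeps the nearest point when several land on one pixel.
    np.minimum.at(depth, (v[ok], u[ok]), pts[ok, 2])
    depth[np.isinf(depth)] = 0.0  # 0 marks "no measurement"
    return depth


def train_sparse_ovr_svm(X, y, n_classes, lam=0.01, lr=0.1, epochs=500):
    """One-vs-rest linear SVMs minimising mean hinge loss + lam * ||w||_1.

    The soft-thresholding (proximal) step drives weights of uninformative
    features to exactly zero, which makes the classifiers sparse.
    """
    n, d = X.shape
    Wt = np.zeros((n_classes, d))
    b = np.zeros(n_classes)
    for c in range(n_classes):
        t = np.where(y == c, 1.0, -1.0)  # +1 for class c, -1 for the rest
        w, bc = np.zeros(d), 0.0
        for _ in range(epochs):
            active = t * (X @ w + bc) < 1  # samples inside the margin
            gw = -(t[active] @ X[active]) / n  # hinge-loss subgradient
            gb = -t[active].sum() / n
            w = w - lr * gw
            w = np.sign(w) * np.maximum(np.abs(w) - lr * lam, 0.0)
            bc -= lr * gb
        Wt[c], b[c] = w, bc
    return Wt, b


def predict(Wt, b, X):
    """Pick the class whose linear score is highest."""
    return np.argmax(X @ Wt.T + b, axis=1)
```

For example, training on three one-hot samples with a fourth, always-zero feature recovers the three labels and leaves that feature's weights at exactly zero in every class. In a real system the inputs to `train_sparse_ovr_svm` would be feature vectors computed from rendered exemplars.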


Text Reference

Grégory Rogez, James S. Supančič III, and Deva Ramanan.

First-person pose recognition using egocentric workspaces.

In CVPR, 2015.

BibTeX Reference

@inproceedings{RogezSR_CVPR_2015,

AUTHOR = "Rogez, Gr{\'e}gory and Supan{\v{c}}i{\v{c}} III, James S. and Ramanan, Deva",

TITLE = "First-Person Pose Recognition using Egocentric Workspaces",

BOOKTITLE = "CVPR",

YEAR = "2015",

tag = "people"

}