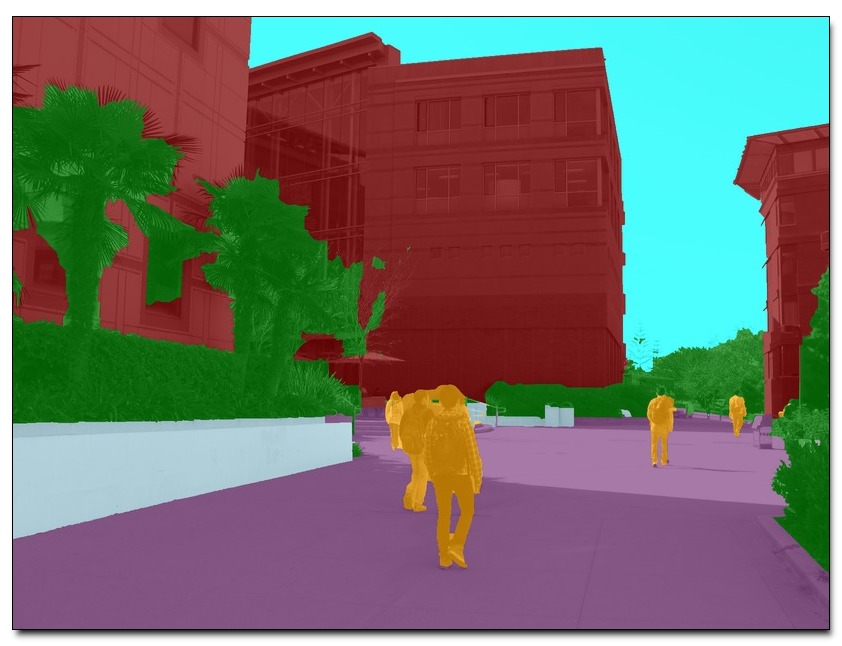

Contextual information can have a substantial impact on the performance of visual tasks such as semantic segmentation, object detection, and geometric estimation. Data stored in Geographic Information Systems (GIS) offers a rich source of contextual information that has been largely untapped by computer vision. We propose to leverage such information for scene understanding by combining GIS resources with large sets of unorganized photographs using Structure from Motion (SfM) techniques. We present a pipeline to quickly generate strong 3D geometric priors from 2D GIS data using SfM models aligned with minimal user input. Given an image resectioned against this model, we generate robust predictions of depth, surface normals, and semantic labels. Despite the lack of detail in the model, we show that the predicted geometry is substantially more accurate than that of other single-image depth estimation methods. We then demonstrate the utility of these contextual constraints for re-scoring pedestrian detections, and use these GIS contextual features alongside object detection score maps to improve a CRF-based semantic segmentation framework, boosting accuracy over baseline models.


Text Reference

Raúl Díaz, Minhaeng Lee, Jochen Schubert, and Charless C. Fowlkes. Lifting GIS maps into strong geometric context for scene understanding. WACV, 2016.

BibTeX Reference

    @article{DiazLSF_WACV_2016,
      author = "D{\'\i}az, Ra{\'u}l and Lee, Minhaeng and Schubert, Jochen and Fowlkes, Charless C.",
      title = "Lifting {GIS} Maps into Strong Geometric Context for Scene Understanding",
      journal = "WACV",
      year = "2016",
      tag = "object_recognition, grouping, geometry"
    }