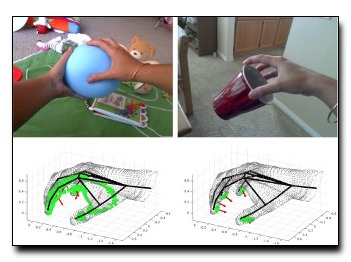

We analyze functional manipulations of handheld objects, formalizing the problem as one of fine-grained grasp classification. To do so, we make use of a recently developed fine-grained taxonomy of human-object grasps. We introduce a large dataset of 12,000 RGB-D images covering 71 everyday grasps in natural interactions. Our dataset differs from past work (typically addressed from a robotics perspective) in its scale, diversity, and combination of RGB and depth data. From a computer-vision perspective, our dataset allows for exploration of contact and force prediction (crucial concepts in functional grasp analysis) from perceptual cues. We present extensive experimental results with state-of-the-art baselines, illustrating the role of segmentation, object context, and 3D understanding in functional grasp analysis. We demonstrate a nearly 2X improvement over prior work and a naive deep baseline, while pointing out important directions for improvement.


Text Reference

Grégory Rogez, James Steven Supančič III, and Deva Ramanan. Understanding everyday hands in action from RGB-D images. In IEEE International Conference on Computer Vision, 2015.

BibTeX Reference

@INPROCEEDINGS{RogezSR_ICCV_2015,
  author = "Rogez, Gr{\'e}gory and Supan{\v{c}}i{\v{c}} III, James Steven and Ramanan, Deva",
  booktitle = "IEEE International Conference on Computer Vision",
  title = "Understanding Everyday Hands in Action from {RGB-D} Images",
  year = "2015",
  tag = "people"
}