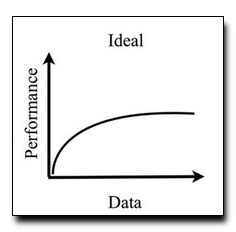

Datasets for training object recognition systems are steadily increasing in size. This paper investigates the question of whether existing detectors will continue to improve as data grows, or saturate in performance due to limited model complexity and the Bayes risk associated with the feature spaces in which they operate. We focus on the popular paradigm of discriminatively trained templates defined on oriented gradient features. We investigate the performance of mixtures of templates as the number of mixture components and the amount of training data grows. Surprisingly, even with proper treatment of regularization and “outliers”, the performance of classic mixture models appears to saturate quickly (∼10 templates and ∼100 positive training examples per template). This is not a limitation of the feature space as compositional mixtures that share template parameters via parts and that can synthesize new templates not encountered during training yield significantly better performance. Based on our analysis, we conjecture that the greatest gains in detection performance will continue to derive from improved representations and learning algorithms that can make efficient use of large datasets.

Download: pdf

Text Reference

Xiangxin Zhu, Carl Vondrick, Charless C Fowlkes, and Deva Ramanan. Do we need more training data? International Journal of Computer Vision, pages 1–17, 2015.

BibTeX Reference

@article{ZhuVFR_IJCV_2015,
  author = "Zhu, Xiangxin and Vondrick, Carl and Fowlkes, Charless C and Ramanan, Deva",
  title = "Do We Need More Training Data?",
  journal = "International Journal of Computer Vision",
  pages = "1--17",
  year = "2015",
  publisher = "Springer",
  tag = "object_recognition"
}